Discretization of the input domain

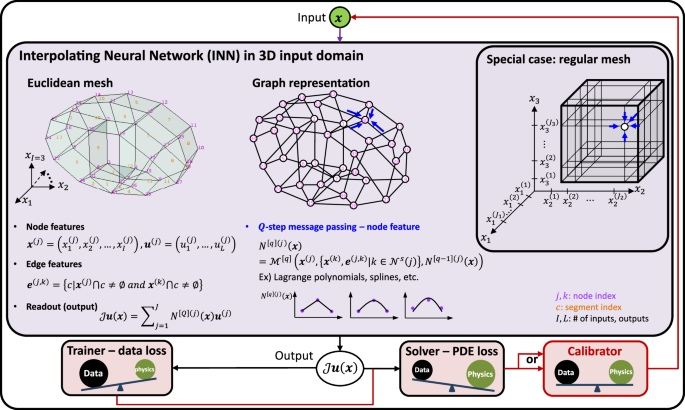

Consider a regression problem that relates I inputs and L outputs. The first step of an INN is to discretize the input domain in the I-dimensional Euclidean space into a mesh, which can be as general as an unstructured irregular mesh or as specific as a structured regular mesh. In any case, a mesh in the Euclidean space can be readily represented as a graph—the most general form of a discretized input—as illustrated in Fig. 1. The graph nodes in an INN are arbitrary points in the input domain, which are irrelevant to the data structure. This is a key distinction of an INN from the graph neural network (GNN), which will be elaborated upon in the following discussion.

Suppose there are J nodes (or vertices) and E edges in the graph, where the input domain is discretized with C non-overlapping segments. Each segment occupies a subspace of the I-dimensional input domain. Each node (e.g., node j) has features: nodal coordinates \(({{{\boldsymbol{x}}}}^{(j)}\in {{\mathbb{R}}}^{I})\) and values \(({{{\boldsymbol{u}}}}^{(j)}\in {{\mathbb{R}}}^{L})\). The superscript with parentheses refers to the graph node index. An edge that links node j and k has a feature e(j, k) that stores indices of segments connected to the edge. For example, when I = 3, L = 2, node 10 in Fig. 1 stores \({{{\boldsymbol{x}}}}^{(10)}=({x}_{1}^{(10)},{x}_{2}^{(10)},{x}_{3}^{(10)})\) and \({{{\boldsymbol{u}}}}^{(10)}=({u}_{1}^{(10)},{u}_{2}^{(10)})\). The edge connecting nodes 30 and 12 stores e(30, 12) = {5, 7}.

Construction of INN interpolation functions via message passing

Once the input domain is properly discretized, INN message passing is conducted to build an interpolation function on each graph node. A typical message passing in a graph-based neural network returns a hidden state for each graph node, which is essentially a tensor (including matrix and vector)24. In contrast, the INN message passing returns a function (i.e., interpolation function N(j)(x), or often called shape function in FEM) for each node (j = 1, …, J) as a hidden state. A general Q-step message passing of an INN, \({{{\mathcal{M}}}}^{[q]}(*)\), can be expressed as:

$${N}^{[q](j)}({{\boldsymbol{x}}}) ={{{\mathcal{M}}}}^{[q]}\left({{{\boldsymbol{x}}}}^{(j)},\{{{{\boldsymbol{x}}}}^{(k)},{{{\boldsymbol{e}}}}^{(j,k)}| k\in {{{\mathcal{N}}}}^{s}(j)\},{N}^{[q-1](j)}({{\boldsymbol{x}}})\right),\\ q =1,\cdots \,,Q,\qquad {N}^{[0](j)}({{\boldsymbol{x}}})=0$$

(1)

where \({{{\mathcal{N}}}}^{s}(j)\) is a set of neighboring nodes of the center node j with s connections (i.e., s-hops, see Fig. 3 and Supplementary Information (SI) Section 1.1 for high-dimensional cases). The operation \({{{\mathcal{M}}}}^{[q]}(*)\) constructs an interpolation function N[q](j)(x) for a graph node x(j) using neighboring nodal coordinates and edge information, x(k), e(j, k), and the interpolation function of the previous message passing, N[q−1](j)(x).

The function N[Q](j)(x) is the INN interpolation function at node j after Q message passing. The \({{{\mathcal{N}}}}^{s}(j)\) is a set of neighboring nodes of the center node j with s connections (or s-hops).

It is worth noting that the interpolation functions used throughout this article satisfy the Kronecker delta property, i.e., N[q](j)(x(k)) = δjk, meaning that the INN function space exactly passes the interpolation points. However, this condition may be relaxed depending on the specific problem and the chosen interpolation method. For instance, if the training data is highly noisy or spline basis functions are used, this condition may no longer be necessary.

With a single message passing (Q = 1) and s = 1 hop, the INN message passing degenerates to the standard FEM linear shape function (or Lagrange polynomials of order P = 1). As illustrated in Fig. 3 (left), it is the most localized approximation of an INN. In this case, the message passing operation at node j = 3 is written as:

$${N}^{[Q=1](j=3)}(x) ={{{\mathcal{M}}}}^{[Q=1]}\left({x}^{(j=3)},\{{x}^{(k)},{{{\boldsymbol{e}}}}^{(j=3,k)}| k\in {{{\mathcal{N}}}}^{s=1}(j=3)\}\right),\\ =\left\{\begin{array}{ll}\frac{x-{x}^{(2)}}{{x}^{(3)}-{x}^{(2)}} & x\in {A}^{(2)}\\ \frac{{x}^{(4)}-x}{{x}^{(4)}-{x}^{(3)}} & x\in {A}^{(3)}\\ 0\hfill &\,{{\mbox{otherwise}}}\end{array}\right.$$

(2)

where A(c) refers to the region of segment c; e.g,. A(2) = [x∣x(2) ≤ x ≤ x(3)].

One can progressively enlarge the support domain and the approximation capability of an interpolation function by adding message passing with a higher hop. For instance, the second message passing with s = 2 hop constructs compact-supported interpolation functions with higher nonlinearity. This is theoretically equivalent to the generalized finite element method (GFEM)29 and convolution hierarchical deep-learning neural networks (C-HiDeNN)7,20. The message passing with a max-hop (Fig. 3, right) can even make it a non-local approximation where the support of interpolation functions occupies the entire input domain, mimicking meshfree methods that exhibit superconvergence (i.e., faster convergence rate than the complete order of polynomial basis)30,31,32. Depending on the choice of interpolation technique, other hyperparameters can be involved in \({{{\mathcal{M}}}}^{[q]}(*)\), such as the activation (or basis) function and dilation parameter7,20. This enables adaptive update of INN interpolation functions during training to improve accuracy (see SI Section 2.2). A more comprehensive discussion on functional approximation through interpolation theory and subsequent solvers can be found in refs. 33,34,35. See SI Section 1 for various choices of the message passing operation.

Regardless of the choice of interpolation technique for the message passing, the global DoFs (i.e., number of nodes) remain constant. INNs adapt nodal connectivity through the adjacency matrix and basis functions to reproduce almost any interpolation technique available in numerical methods. The compact-supported interpolation functions enable INNs to be optimized locally and promptly, making INNs distinguishable from MLPs. Since an MLP is a global approximator, INNs with compact-supported interpolation functions 1) converge faster than MLPs with the same number of trainable parameters, and 2) facilitate training on a sparse dataset.

INN forward pass

During the forward propagation, an input variable \({{\boldsymbol{x}}}\in {{\mathbb{R}}}^{I}\) enters each graph node’s interpolation function N[Q](j)(x), followed by a graph-level readout operation:

$${{\mathcal{J}}}{{\boldsymbol{u}}}({{\boldsymbol{x}}})={\sum }_{j=1}^{J}{N}^{(j)}({{\boldsymbol{x}}}){{{\boldsymbol{u}}}}^{(j)},\quad {{\boldsymbol{x}}}\in {{\mathbb{R}}}^{I},{{\boldsymbol{u}}}\in {{\mathbb{R}}}^{L},$$

(3)

where the superscript [Q] is dropped for brevity (i.e., N[Q](j)(x) = N(j)(x)). The interpolation operator \({{\mathcal{J}}}\) will designate an interpolated field output throughout this paper. The readout operation can be written as a matrix multiplication:

$${{\mathcal{J}}}{{\boldsymbol{u}}}({{\boldsymbol{x}}})=\left[\begin{array}{cccc}| &| &| &| \\ {{{\boldsymbol{u}}}}^{(1)}&{{{\boldsymbol{u}}}}^{(2)}&\cdots \,&{{{\boldsymbol{u}}}}^{(J)}\\ | &| &| &| \end{array}\right]\cdot \left[\begin{array}{c}{N}^{(1)}({{\boldsymbol{x}}})\\ {N}^{(2)}({{\boldsymbol{x}}})\\ \vdots \\ {N}^{(J)}({{\boldsymbol{x}}})\end{array}\right]={{\boldsymbol{U}}}{{\bf{{{\mathcal{X}}}}}}(x),$$

(4)

where \({{{\boldsymbol{u}}}}^{(j)}\in {{\mathbb{R}}}^{L},{{\boldsymbol{U}}}\in {{\mathbb{R}}}^{L\times J},{{\bf{{{\mathcal{X}}}}}}(x)\in {{\mathbb{R}}}^{J}\). The matrix U is a horizontal stack of nodal values u(j), while \({{\bf{{{\mathcal{X}}}}}}(x)\) is a vectorized function of x that is parameterized with nodal coordinates x(j) during the message passing operation.

The graph node features (i.e., coordinate (x(j)) and value (u(j))) are trainable parameters of the INN. If the nodal coordinates (x(j)) are fixed, one can find nodal values (u(j)) without changing the discretization of the input domain. If the nodal coordinates are also updated, the optimization will adjust the domain discretization similar to r-adaptivity in FEM17,18. Once the forward propagation is defined, the loss function is chosen based on the problem type: training, solving, or calibrating (see Method Section for details).

TD for model order reduction and scalability

When the input domain is discretized with a regular mesh, as illustrated in the special case of Fig. 1, we can significantly reduce the trainable parameters (or degrees of freedom, DoFs) by leveraging TD23 that makes INN scalable. Here, we introduce the two widely accepted TD methods: Tucker decomposition36,37 and CANDECOMP/PARAFAC (CP) decomposition38,39,40.

One of the widely used TD methods is Tucker decomposition36,37. It approximates a high-order tensor with Ji nodes in i-th dimension as a tensor contraction between dimension-wise matrices and a core tensor \({{\bf{{{\mathcal{G}}}}}}\), which has the same order of the original tensor but with smaller nodes Mi (<Ji). The Mi is often called a “mode” to distinguish it from the original node Ji.

Consider a three-input (I = 3) and one output (L = 1) system, and assume the input domain is discretized with J = J1 × J2 × J3 nodes, as shown in the special case box of Fig. 1. To facilitate tensor notation, the nodal values will be denoted with left/right super/sub scripts, \(\begin{array}{l}m\\ i\end{array}{u}_{l}^{(j)}\), where \(i\in {{\mathbb{N}}}^{I}\) is the input index, \(m\in {{\mathbb{N}}}^{{M}_{i}}\) is the mode index, \(l\in {{\mathbb{N}}}^{L}\) is the output index, and \(j\in {{\mathbb{N}}}^{{J}_{i}}\) is the nodal index.

The interpolated field \({{\mathcal{J}}}u({{\boldsymbol{x}}})\in {{\mathbb{R}}}^{L=1}\) can be represented as a Tucker product:

$${{\mathcal{J}}}u({{\boldsymbol{x}}}) =[\![{{\bf{{{\mathcal{G}}}}}};{({{\mathcal{J}}}\begin{array}{l}\\ 1\end{array}{{\boldsymbol{u}}})}^{T},{({{\mathcal{J}}}\begin{array}{l}\\ 2\end{array}{{\boldsymbol{u}}})}^{T},{({{\mathcal{J}}}\begin{array}{l}\\ 3\end{array}{{\boldsymbol{u}}})}^{T}]\!]\\ ={{\bf{{{\mathcal{G}}}}}}{\times }_{1}^{2}{({{\mathcal{J}}}\begin{array}{l}\\ 1\end{array}{{\boldsymbol{u}}})}^{T}{\times }_{2}^{2}{({{\mathcal{J}}}\begin{array}{l}\\ 2\end{array}{{\boldsymbol{u}}})}^{T}{\times }_{3}^{2}{({{\mathcal{J}}}\begin{array}{l}\\ 3\end{array}{{\boldsymbol{u}}})}^{T},$$

(5)

where the core tensor \({{\bf{{{\mathcal{G}}}}}}\in {{\mathbb{R}}}^{{M}_{1}\times {M}_{2}\times {M}_{3}}\) is a trainable full matrix typically smaller than the original tensor that compresses the data. Here, \({{\bf{{{\mathcal{A}}}}}}{\times }_{a}^{b}{{\bf{{{\mathcal{B}}}}}}\) denotes the tensor contraction operation between the a-th dimension of tensor \({{\bf{{{\mathcal{A}}}}}}\) and the b-th dimension of tensor \({{\bf{{{\mathcal{B}}}}}}\). The \({{\mathcal{J}}}\begin{array}{l}\\ i\end{array}{{\boldsymbol{u}}}({x}_{i})\in {{\mathbb{R}}}^{{M}_{i}\times 1}\) is one-dimensional (1D) interpolated output of ith input dimension over Mi modes represented as:

$${{\mathcal{J}}}\begin{array}{l}\\ i\end{array}{{\boldsymbol{u}}}({x}_{i}) =\left[\begin{array}{cccc}\begin{array}{l}1\\ i\end{array}{u}^{(1)}&\begin{array}{l}1\\ i\end{array}{u}^{(2)}&\cdots \,&\begin{array}{l}1\\ i\end{array}{u}^{({J}_{i})}\\ \begin{array}{l}2\\ i\end{array}{u}^{(1)}&\begin{array}{l}2\\ i\end{array}{u}^{(2)}&\cdots \,&\begin{array}{l}2\\ i\end{array}{u}^{({J}_{i})}\\ \vdots &\vdots &\vdots &\vdots \\ \begin{array}{l}{M}_{i}\\ i\end{array}{u}^{(1)}&\begin{array}{l}{M}_{i}\\ i\end{array}{u}^{(2)}&\cdots \,&\begin{array}{l}{M}_{i}\\ i\end{array}{u}^{({J}_{i})}\end{array}\right]\cdot \left[\begin{array}{c}{N}^{(1)}({x}_{i})\\ {N}^{(2)}({x}_{i})\\ \vdots \\ {N}^{({J}_{i})}({x}_{i})\end{array}\right]\\ =\begin{array}{l}\\ i\end{array}{{\boldsymbol{U}}}{\times }_{2}^{1}\begin{array}{l}\\ i\end{array}{{\mathcal{X}}}({x}_{i})=\begin{array}{l}\\ i\end{array}{{\boldsymbol{U}}}\begin{array}{l}\\ i\end{array}{{\mathcal{X}}}({x}_{i}),$$

(6)

where \(\begin{array}{l}\\ i\end{array}{{\boldsymbol{U}}}\in {{\mathbb{R}}}^{{M}_{i}\times {J}_{i}}\) and \(\begin{array}{l}\\ i\end{array}{{\bf{{{\mathcal{X}}}}}}(x)\in {{\mathbb{R}}}^{{J}_{i}}\). As illustrated in the special case box of Fig. 1, the message passing (blue arrow) only happens in the axial directions, yielding 1D interpolation functions: \({N}^{({J}_{i})}({x}_{i})\).

When there is more than one output (L > 1), the interpolated field becomes a vector of L elements:

$${{\mathcal{J}}}{{\boldsymbol{u}}}({{\boldsymbol{x}}}) =\left[{{\mathcal{J}}}{u}_{1}({{\boldsymbol{x}}}),{{\mathcal{J}}}{u}_{2}({{\boldsymbol{x}}}),\cdots {{\mathcal{J}}}{u}_{L}({{\boldsymbol{x}}})\right],\,{\mbox{where}}\,\\ {{\mathcal{J}}}{u}_{l}({{\boldsymbol{x}}}) =[\![{{{\bf{{{\mathcal{G}}}}}}}_{l};{({{\mathcal{J}}}\begin{array}{l}\\ 1\end{array}{{{\boldsymbol{u}}}}_{l})}^{T},{({{\mathcal{J}}}\begin{array}{l}\\ 2\end{array}{{{\boldsymbol{u}}}}_{l})}^{T},{({{\mathcal{J}}}\begin{array}{l}\\ 3\end{array}{{{\boldsymbol{u}}}}_{l})}^{T}]\!]\\ ={{{\bf{{{\mathcal{G}}}}}}}_{l}{\times }_{1}^{2}{({{\mathcal{J}}}\begin{array}{l}\\ 1\end{array}{{{\boldsymbol{u}}}}_{l})}^{T}{\times }_{2}^{2}{({{\mathcal{J}}}\begin{array}{l}\\ 2\end{array}{{{\boldsymbol{u}}}}_{l})}^{T}{\times }_{3}^{2}{({{\mathcal{J}}}\begin{array}{l}\\ 3\end{array}{{{\boldsymbol{u}}}}_{l})}^{T},$$

(7)

where \({{\mathcal{J}}}\begin{array}{l}\\ i\end{array}{{{\boldsymbol{u}}}}_{l}\in {{\mathbb{R}}}^{{M}_{i}\times 1}\). The trainable parameters are the core tensors \({{{\mathcal{G}}}}_{l}\in {{\mathbb{R}}}^{{M}_{1}\times {M}_{2}\times {M}_{3}},l=1,\ldots,L\), and the nodal values \(\begin{array}{l}\\ i\end{array}{{{\boldsymbol{U}}}}_{l}\in {{\mathbb{R}}}^{{M}_{i}\times {J}_{i}},l=1,\ldots,L\), yielding a total count of \(L({\prod }_{i}^{I}{M}_{i}+\mathop{\sum }_{i}^{I}{M}_{i}{J}_{i})\) that scales linearly with the nodal discretization Ji, the number of modes M, and the number of outputs L.

Tucker decomposition can be further simplified to CANDECOMP/PARAFAC (CP) decomposition38,39,40 by setting the core tensor \({{\bf{{{\mathcal{G}}}}}}\) as an order-I super diagonal tensor: \({{\bf{{{\mathcal{G}}}}}}\in {{\mathbb{R}}}^{{M}^{I}}\), M = M1 = ⋯ = MI, all zero entries except the diagonal elements. If we further set the diagonal elements of \({{\bf{{{\mathcal{G}}}}}}\) to be 1, the Tucker decomposition in Eq. (7) becomes:

$${{\mathcal{J}}}{{\boldsymbol{u}}}({{\boldsymbol{x}}})={\sum }_{m=1}^{M}\left[{{\mathcal{J}}}\begin{array}{l}m\\ 1\end{array}{{\boldsymbol{u}}}({x}_{1})\odot \cdots \odot {{\mathcal{J}}}\begin{array}{l}m\\ I\end{array}{{\boldsymbol{u}}}({x}_{I})\right],$$

(8)

where \({{\mathcal{J}}}\begin{array}{l}m\\ i\end{array}{{\boldsymbol{u}}}({x}_{i})\in {{\mathbb{R}}}^{L}\) and ⊙ represents multiplication in elements. In CP decomposition, the core tensor is no longer trainable; thus, the total trainable parameter becomes \(ML{\sum }_{i}^{I}\,{J}_{i}\). If the 1D interpolated fields \({{\mathcal{J}}}\begin{array}{l}m\\ i\end{array}{{\boldsymbol{u}}}({x}_{i})\) are constructed with Q = 1 message passing, Eq. (8) degenerates to the HiDeNN-TD19. The Q = 2 nonlinear INN approximation fields are called C-HiDeNN-TD20.

It is important to note that both Tucker and CP decomposition replace a high-dimensional interpolation with one-dimensional interpolations that dramatically reduce the number of trainable parameters (or DoFs). As given in Table 1, the number of trainable parameters of the full interpolation scales exponentially with the input dimension I, whereas the Tucker and CP decomposition scales linearly with I. Considering the number of trainable parameters of multi-layer perceptrons (MLPs) scales quadratically with the number of hidden neurons, while that of INNs always scales linearly with the mode M, input I, output L, and discretization J, INNs with TD may dramatically reduce the model complexity and computing requirements. Therefore, INNs can be a sustainable alternative to traditional AI models.

However, the reconstructed basis using TD loses expressibility because of the separated variables. There is no cross-term in the functional space of TD. Nevertheless, we can transform this into an advantage by enriching the 1D approximations with adaptive activation (see SI Section 2.2) for the Q-step message passing. Therefore, we can find a hybrid architecture that preserves the advantages of numerical methods (i.e., FEM) and neural networks while acknowledging that it loses something from both.

Relevance to GNNs

GNNs have been widely applied to various machine learning tasks involving graph-structured data41,42. These tasks are typically categorized into three levels: node-level, edge-level, and graph-level learning. INNs, on the other hand, leverage the graph structure to construct a network architecture, but the graph nodes do not have to conform to the data structure. They are arbitrary interpolation points in the input domain. Furthermore, an INN generally performs graph-level predictions; given an input x, it predicts an output u by summing the product of interpolation functions and interpolation values (see Eq. (4)). In SI Section 2.7, we present a benchmark involving the training of 3D FEA simulation results and compare the problem formulations and training outcomes across MLP, GNN, and INN models.

Relevance to PGD

INNs with CP TD have remarkable similarity with the proper generalized decomposition (PGD), which will be elucidated in two different aspects: function approximation and solution schemes.

PGDs can utilize any functional space that admits the CP decomposition form (see Eq. (8)). Thus, an INN employing CP decomposition becomes functionally equivalent to the PGD. However, the INN framework was developed from a broader perspective: starting from general, non-separable function approximations and later incorporating structured decompositions such as Tucker and CP decomposition to effectively handle input domains discretized on regular grids. From the function approximation standpoint, the PGD can therefore be viewed as a subset of the INN.

In terms of the solution scheme, we further divide our discussion into the PDE solver and the data-driven trainer. For PDE solving, the general rule of PGD is to find solutions mode by mode and in a decomposed space (1D or low-dimensional space) for each mode. This is often referred to as subspace iteration43. The INN solver, on the other hand, generally solves for all modes and dimensions at once. Detailed solution scheme of the INN solver can be found in SI Section 4.2 and44.

Similarly, the general rule of an INN trainer is to optimize the entire trainable parameters simultaneously, akin to how weights and biases are jointly updated in MLPs during backpropagation. However, alternative optimization strategies are certainly possible. For example, one could train the INN sequentially by mode or by input dimension (similar to the way the PGD solves a PDE), or optimize a set of modes together, followed by optimizing another set of modes. Exploring such customized training schemes is a promising direction for future work.

Benchmarking INN trainer

To highlight INN’s advantages in speed (epoch at convergence) and storage (number of parameters) over MLP, we introduce a benchmark problem: a 10-input 5-output physical function45, (see SI Section 2.4 for details). Using this equation, we randomly generate 100,000 data points using a Latin hypercube sampling. The dataset is split into 80% and 20% for training and testing, respectively. MLPs with two and three hidden layers and with a sigmoid activation are tested, while INNs with CP decomposition, two (Q = 2) message passing, and s = 2, P = 2 polynomial activation are adopted. To investigate the convergence behavior depending on the number of parameters, we set the stopping criteria as training mean squared error (MSE): 4e-4 and counted the epoch at convergence. Since the physical function is deterministic (no noise) and we drew a sufficiently large number of data, no considerable overfitting was observed.

Figure 4a reveals that given the same number of trainable parameters, INNs converge significantly faster than MLPs. For instance, the INN (4 seg., 10 modes) with 2500 parameters converged at the 19th epoch, while the MLP (2 layers, 40 neurons) with 2285 parameters converged at the 253th epoch. MLPs with more parameters tend to stop at earlier epochs, however, even the largest MLP (3 layers, 100 neurons) with 21,805 parameters converged at the 45th epoch, while that of INN (10 seg., 14 modes) with only 7700 parameters converges at the 14th epoch. Notice that all benchmarks were conducted using NVIDIA RTX A6000 GPU with 48GB VRAM, and the training time per epoch for INN and MLP was indistinguishable (around 1 second per epoch). This benchmark demonstrates that the INN trainer is lightweight and fast-converging compared to traditional MLPs, underlining the sustainability and scalability of INNs. Other benchmarks of the INN trainer are elucidated in SI Section 2: SI Section 2.2: Adaptive INN activation function, SI Section 2.3: Training one-input two-output function, SI Section 2.5: Four-input one output battery manufacturing data, SI Section 2.6: Spiral classification, and SI Section 2.7: Learning FEA simulation results. We highlight SI Section 2.2, where the INN interpolation functions are updated during training to enhance model accuracy. This demonstrates INN’s capability of adaptive activation function, a widely accepted concept in AI and the scientific machine learning literature46,47. SI Sections 2.3, 2.5, and 2.6 demonstrate INN’s generalizability for unseen data and fast convergence. An in-depth discussion on the choice of INN hyperparameters is provided in SI Section 2.10. To reproduce the benchmarks, readers may refer to the document “Instructions for benchmark reproduction.pdf” or the tutorial directory of the GitHub repository https://github.com/hachanook/pyinn.

a A standard regression problem is studied. The training stops when the training loss hits the stopping criteria (MSE:4e-4). We set the same optimization condition: ADAM optimizer; learning rate: 1e-3; batch size: 128. The number of neurons per hidden layer is denoted for each MLP data point, while the number of segments and modes are denoted for each INN data point. MLP used the sigmoid activation function, while INN with CP decomposition adopted Q = 2, s = 2, P = 2. b A 1D Poisson’s equation is solved with PINN and INN. PINN is made of a 1-layer MLP with a varying number of neurons. Randomly selected 10k collocation points are used to compute the PDE loss. With a batch size of 128, 2000 epochs are conducted using an ADAM optimizer with a learning rate of 1e-1. INN Q = 1, s = 1, P = 1 with the galerkin formulation is equivalent to FEM with linear elements. All solvers and trainers are graphics processing units (GPU) optimized with the JAX library65.

Benchmarking INN solver

INNs can achieve well-known theoretical convergence rates when solving a PDE7. In numerical analysis, the convergence rate is the rate at which an error measurement decays as the mesh refines. This benchmark solves a 1D Poisson’s equation defined in the Method Section with the H1 norm error estimator. It is mathematically proven that a numerical solution of FEM or any interpolation-based solution method exhibits a convergence rate of P, which is the complete order of the polynomial basis used in the interpolation20.

Figure 4b is the H1 error vs. trainable parameters (i.e., degrees of freedom or DoFs) plot, where the convergence rates are denoted as the slope of the log–log plot.

INNs with different hyperparameters are studied. As expected from the convergence theory, the H1 error of INNs with different P converges at a rate of P. On the other hand, PINNs constructed with MLP do not reveal a convergent behavior. Only a few theoretical works have proved the convergence rate of PINNs under specific conditions48. A PDE solver needs to have a stable and predictable convergence rate because it guides an engineer in choosing the mesh resolution and other hyperparameters for achieving the desired level of accuracy. INN solvers meet this requirement by choosing the right hyperparameters for the message passing (i.e., construction of the interpolation function). We also demonstrate the convergent behavior of INN solvers with and without CP decomposition for a 3D linear elasticity equation in SI Sections 4.5 and 4.6.

link