Macroscopic indicators for information flow

Let G be a network of N nodes and ∣E∣ connections, respectively. The connections are often encoded by the adjacency matrix A, where Aij = 1 if nodes i and j are connected, and Aij = 0 otherwise. For a weighted network, the values Aij can be non-binary. Information exchange between the components happens through the connections.

We focus on diffusion as one of the simplest and most versatile processes to proxy the flow of information59 governed by the graph Laplacian matrix L = D − A, where D is a diagonal matrix, with Dii = ki being the connectivity of node i. Consequently, we write the diffusion equation and its solution:

$${\partial }_{\tau }{\psi }_{\tau }=-{{{\bf{L}}}}{\psi }_{\tau },\\ {\psi }_{\tau }={e}^{-\tau {{{\bf{L}}}}}{\psi }_{0},$$

(1)

where ψτ is a concentration vector, describing the distribution of a quantity over nodes and e−τL gives the time-evolution operator, with \({\left.{e}^{-\tau {{{\bf{L}}}}}\right]}_{ij}\) giving the flow from node j to i after τ time steps.

The network density matrix can be defined as

$${{{{\boldsymbol{\rho }}}}}_{\tau }=\frac{{e}^{-\tau {{{\bf{L}}}}}}{{Z}_{\tau }},$$

(2)

where \({Z}_{\tau }={{{\rm{Tr}}}}\,({e}^{-\tau {{{\bf{L}}}}})\) is the network counterpart of the partition function. In fact, Eq. (2) describes a superposition of an ensemble of operators (the outer product of eigenvectors of the Laplacian matrix) that guide the flow of information in the system53,60, with applications ranging from classification of healthy and disease states39,61 and robustness analysis62,63 to dimensionality reduction of multilayer networks56 and renormalization group52.

More importantly, the network Von Neumann entropy defined by \({{{{\mathcal{S}}}}}_{\tau }=-{{{\rm{Tr}}}}\,({{{{\boldsymbol{\rho }}}}}_{\tau }\log {{{{\boldsymbol{\rho }}}}}_{\tau })\) has been studied as a measure of how diverse the system responds to perturbations53,54, and the network free energy, \({F}_{\tau }=-\log {Z}_{\tau }/\tau\), as a measure of how fast the signal transport between the nodes55,56.

Since the network density matrix is Gibbsian (Eq. (2)) and the network internal free energy can be defined as \({U}_{\tau }={{{\rm{Tr}}}}({{{\bf{L}}}}{{{{\boldsymbol{\rho }}}}}_{\tau })\), we can write the network free and internal energy and Von Neumann entropy in terms of Zτ and its derivative:

$${F}_{\tau }=-\!\log {Z}_{\tau }/\tau$$

(3)

$${U}_{\tau }=-\!{\partial }_{\tau }\log {Z}_{\tau }$$

(4)

$${S}_{\tau }=\tau ({U}_{\tau }-{F}_{\tau }),$$

(5)

exactly like in equilibrium thermodynamics. It is noteworthy that having a compressed network with a compressed Zτ matching the one of the original network mathematically guarantees identical global information dynamics, measured in terms of macroscopic physics indicators such as network entropy and free energy. We consider a compression where the system changes size from N \({N}^{{\prime} }\), leading to a new Laplacian \(({L}^{{\prime} })\) and a new partition function \(({Z}^{{\prime} })\). Here, we aim to reach a compression that follows:

$$Z=\gamma {Z}^{{\prime} },$$

(6)

regardless of τ, with minimum error.

In fact, if the two networks have different sizes, the only valid value for the multiplier is \(\gamma=N/{N}^{{\prime} }\). A simple argument to support this claim is that at τ = 0 it can be shown that Z = N and \({Z}^{{\prime} }={N}^{{\prime} }\), regardless of the network topology. Therefore, the only solution not rejected by the counter example of τ = 0, which gives the maximum value for the partition function, is \(\gamma=N/{N}^{{\prime} }\). If \(\gamma=N/{N}^{{\prime} }\) with N and \({N}^{{\prime} }\) being the sizes of the original and the reduced systems, these transformations can be interpreted as size adjustments.

We show that such a compression simultaneously preserves other important functions, like entropy and free energy, after size adjustment. For instance, the system’s entropy has its maximum \(S=\log N\) at τ = 0, which can be transformed into the compressed entropy with the subtraction of the factor \(\log \gamma\) as size adjustment \(S\to S-\log \gamma\). Similarly, for free energy \(F=-\log Z/\tau\), the size required adjustment reads \(-\log Z/\tau \to -\log Z/\tau+\log \gamma /\tau\).

The partition function transformation we discussed earlier (Z → γZ, and therefore \({Z}^{{\prime} }=Z/\gamma\)), automatically guarantees both transformations:

$${S}^{{\prime} } =-\tau {\partial }_{\tau }\log {Z}^{{\prime} }-\tau {F}^{{\prime} }\\ =-\tau {\partial }_{\tau }\log {Z}^{{\prime} }+\tau \log {Z}^{{\prime} }\\ =-\tau {\partial }_{\tau }[\log Z-\log \gamma ]+\log Z-\log \gamma \\ =S-\log \gamma,$$

(7)

$${F}^{{\prime} }=-\frac{\log {Z}^{{\prime} }}{\tau }=-\frac{\log Z/\gamma }{\tau }=F+\frac{\log \gamma }{\tau }.$$

(8)

Also, since the von Neumann entropy of the coarse-grained system is given by

$${S}^{{\prime} }=\tau {U}^{{\prime} }+\log {Z}^{{\prime} },$$

(9)

the transformation of the internal energy can be obtained as:

$$S+\log \gamma =\tau {U}^{{\prime} }+\log \gamma+\log Z,\\ \tau U+\log Z =\tau {U}^{{\prime} }+\log Z,\\ U ={U}^{{\prime} },$$

(10)

In the following section, we use machine learning to find compressions replicating the original entropy and free energy curves by approximating \({Z}^{{\prime} }=Z/\gamma\), for all network types across all values of τ.

Machine learning model

Graph neural networks are a class of models suited for machine learning tasks on graphs that leverage node features as structural information64,65. Based on the fundamental idea of aggregating and updating node information with learnable functions, graph neural networks have spawned numerous variants such as graph convolutional networks66, graph attention networks67, graph isomorphism networks68, and graph Transformers69. They have been widely applied to problems such as node classification70, structural inference71, generative tasks72, and combinatorial optimization tasks73 on graphs.

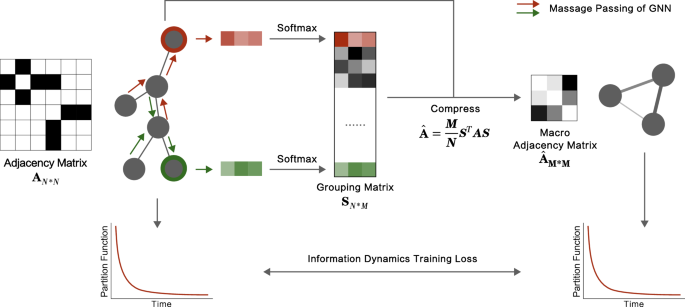

Our approach to solving the network compression problem using graph neural networks is illustrated in Fig. 1. Here, we employ graph isomorphism networks to aggregate node features and decide on the grouping of nodes. Nodes within the same group are compressed into a supernode. Also, the sum of their connections becomes a weighted self-loop of the supernode. Of course, the inter-group connections shape the weighted links between different supernodes. To assess how effective a coarse-graining is, we compare the Mean Absolute Error (MAE) of the normalized partition functions, \(\frac{Z}{N}\), where N is the number of nodes in the original network or the compressed one. Therefore, MAE is the loss function that we use to train the graph neural network. Note that, through the optimization, the model continuously adjusts the mapping from node local structural features to node groupings, using gradient descent. This adjustment ensures that the resulting macroscopic network has a partition function resembling the original network.

Here we mention a number of suitable properties of our model. First, since every edge in the original network becomes a part of a weighted edge in the macroscopic network, the connectedness of the network is guaranteed. If the original network is connected, the macroscopic network will also be connected. Second, the number of nodes in the macroscopic network can be flexibly configured as a hyperparameter that can be tune to satisfy different tasks. Lastly, as we show in the next sections, the time complexity of our model is O(N + E), where N and E are the number of nodes and edges in the original network, respectively. This is a considerable edge over other models that often perform at O(N3)— including box-covering, laplacian RG, or O(N2)— for instance, geometric RG. For this reason, we are able to compress very large networks. Please see the supplementary material for a more detailed analysis of time complexity (Supplementary Note 7) and experiment on network compression with 100,000 nodes (Supplementary Fig. 3).

How our method differs from Laplacian Renormalization Group (LRG)

Since both methods use network density matrices, it is necessary to discuss their differences. The LRG was among the first to introduce Kadanoff’s decimation based on diffusion dynamics on networks as the core of the renormalization process. It has successfully pushed the boundaries of RG to include structural heterogeneity, a feature widely observed in complex systems. To do so, LRG focuses on the specific heat at the specific scale of phase transition to define their information cores52,60 and use them to perform renormalization.

It is crucial to note that the difference between LRG and our method is not limited to the machine learning implementation. Instead, the fundamental difference is in the theoretical conceptualization of the RG problem. We base our method on the fact that the partition function contains the full information of the macroscopic descriptors of the flow, including network entropy and free energy— as demonstrated in the previous sections. Therefore, by trying to replicate the full partition function profile at all propagation scales (all values of τ) we replicate the macroscopic features like the diversity of diffusion pathways and the flow speed in reaching distant parts of the network.

It is worth mentioning that LRG can be used to renormalize at any particular scale (τ). However, they discard the fast modes of diffusion processes (corresponding to eigenvalues greater than \(\frac{1}{\tau }\)). Instead, our method preserves the complete distribution of eigenvalues at all scales simultaneously by maintaining the full behavior of the partition function

Finally, in addition to the discussed theoretical arguments, we use various numerical evidence to demonstrate that our domain of applicability is much larger than LRG’s. As mentioned before, LRG is designed for heterogeneous networks like Barabasi-Albert (BA) and scale-free trees. However, the topology of real-world networks is not limited to what the BA model can generate. The two decades of network science have revealed that real-world networks also exhibit important features such as segregation while integration and order while disorder— i.e., modules, motifs, etc. That is why the compression methods must eventually go beyond the current limitations and model-specific assumptions.

In the following sections, we compare the performance of NFC Model and other widely used methods, including LRG, on both synthetic and empirical networks. Please see the supplementary Note 3 for a more detailed comparison with LRG on different datasets regarding the preservation of information flow (Supplementary Fig. 5) and entropy (Supplementary Fig. 7), the retention of eigenvalue distributions (Supplementary Fig. 6), and differences in supernode composition (Supplementary Fig. 8) and macroscopic network structures (Supplementary Fig. 9)— which comparison clearly shows a large difference between our method and LRG, both in the performance and the way they group nodes together.

Synthetic networks compression

Here, we use our model to compress four synthetic networks: two Stochastic Block Model (SBM) with mixing parameters (μ) of 0.029 and 0.127, and two Barabási–Albert (BA) models with parameters m = 1, 2, all with 256 nodes (See Fig. 2).

As expected, for very large compressions, we find an increase in the deviation between partition functions— i.e., cost. However, interestingly, the compression error exhibits an approximately scale-free relationship with network size, meaning that no compression size is special. We visualize the compressed network with 35 nodes in the second row of subfigures in Fig. 2, where the nodes in the compressed network and the corresponding nodes in the original network were assigned the same color.

We show that our approach outperforms others including Box-Covering, Geometric Renormalization, and Laplacian Renormalization. Also, our results indicate that the mesoscale structure of SBM and the presence of hubs in BA play important roles in the topology of the resulting compressed network. The nodes belonging to the same community in the original SBM networks often group together to form a supernode in the macro-network. More surprisingly, in the BA network, nodes with a similar number of connections were typically coarse-grained together.

This finding suggests that regardless of being directly connected or not, nodes with similar local structures need to group together when the goal is recovering the flow of information. Here, the coarse-graining of nodes with similar local structures is learned by the model automatically and was not predetermined a priori.

Empirical networks compression

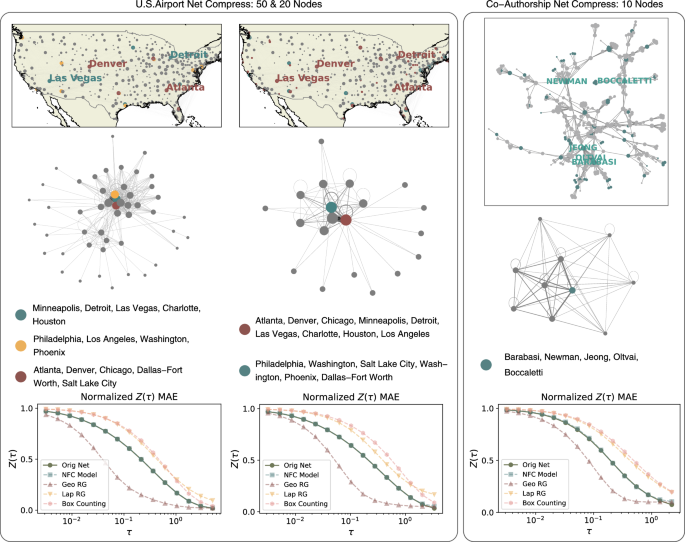

We analyze two representative empirical networks to test the capability of our method. The networks include the U.S. Airports, taken from Open Flights and Netzschleuder, and the Scientists Co-Authorship Network used in 200674.

In the U.S. Airports Network, nodes and edges represent airports and flight routes between them— i.e., a binary and undirected edge connects two airports if there is at least one flight between them. Similarly, in the co-authorship network, nodes are individual researchers, and an edge exists between two scientists if they have co-authored at least one paper. (For more details on the datasets, see Table 1).

Firstly, we reduce the US airport network up to 50 and 20 supernodes compression sizes (See Fig. 3). We highlight the 15 airports with the highest connectivity, located in the cities of Atlanta, Denver, Chicago, Dallas-Fort Worth, Minneapolis, Detroit, Las Vegas, Charlotte, Houston, Philadelphia, Los Angeles, Washington, Salt Lake City, and Phoenix. Interestingly, these 15 airports group together to form only a few supernodes, owing to their similar local structures— i.e., degree, in this case— in both compressions. Furthermore, in the 50-node macro-network, cities like Atlanta and Denver are compressed into one supernode, and cities such as Detroit and Las Vegas shape another supernode. Whereas, in the compression with 20 supernodes, cities including Atlanta, Denver, Detroit and Las Vegas join into one single supernode.

The U.S. Airport Network and the Co-Authorship network of scientists (2006) alongside the corresponding compression outcomes under different macro node configurations. We further illustrate the macro nodes associated with the top 15 airports and top 5 scientists as determined by node degree, some of the top nodes are marked in the original network. It becomes apparent that these most emblematic nodes primarily cluster within a limited number of macro nodes, supporting the hypothesis that nodes with similar local structures gravitate towards similar groups. As the macro airport network shrinks, these nodes amalgamate into fewer macro nodes, suggesting that the diminishing expressivity of the macro network necessitates the model to overlook the distinctions between these nodes. The three subsequent subgraphs delineate the Mean Absolute Error (MAE) of the normalized partition functions in the compression results derived from our method against other competitive approaches. It is worth noting that, due to the inability of some comparative methods (such as Box-Covering) to precisely control the size of the network after compression, we allow them to compress a network larger than the target size and compare it with our method.

The compression of the network scientist system confirms the previous results (See Fig. 3). For instance, the five highest-degree researchers of this dataset, including Barabasi, Jeong, Newman, Oltvai, and Boccaletti, form one supernode, when the size of the macro-network is 10. Note that they are not a tightly connected group in the original network. In fact, Newman, Barabasi, and Boccaletti are not even connected in this network. This further suggests that having similar local structures in the network is a key factor in compression.

Finally, we compare the partition function deviation achieved by different compression methods and show that our approach clearly outperforms others (See Fig. 3), in the case of both empirical systems. For more detailed information on the groupings of all airports, please see Supplementary Table 1.

Overall, confirming the synthetic networks analysis, our approach generates macro networks closely resembling the information flow in the original networks by coarse-graining nodes of similar local structures.

Interpretability of the compression procedure

In experiments with both synthetic and real-world networks, we found that our machine-learning model merges nodes with similar local structures to preserve information flow. Here, we attempt to provide an explanation of how exactly it works.

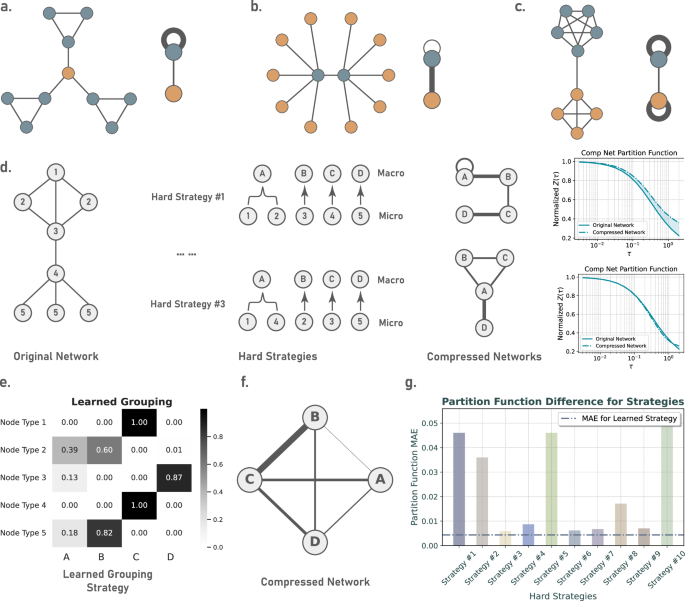

Firstly, clustering nodes with similar local structures together preserves the function of that local structure within the original network while reducing structural redundancy. This can be intuitively understood through the analysis of several typical topological features.

High clustering coefficient nodes

Nodes with a high clustering coefficient often have neighbors that are interconnected, acting to trap information locally during the diffusion of information in the network. Grouping nodes with a high clustering coefficient together, their numerous interconnections will become a high-weight self-loop on the supernode, similarly playing a role in trapping information locally, as shown in Fig. 4, subplot a.

a Illustration of the compression of nodes with high clustering coefficients. Both the high-clustering nodes and their corresponding macro-nodes tend to trap information locally. b Demonstration of the compression of hub nodes. Both the hub nodes and their corresponding macro-nodes act as bridges for global information diffusion. c Compression of community structures: Nodes within the same community are compressed into a supernode, which, similar to the original community structure, tends to trap information locally. d We show the macroscopic network after compression using different hard(discrete) grouping strategies for a toy network with 8 nodes and 5 types of different local structures, and the changes in the corresponding partition function. e The soft(continuous) grouping strategy learned by our model. In (f) we present the macroscopic network structure given by the grouping strategy from (e). g Comparison of the normalized partition function error before and after compression under different grouping strategies.

Hubs

Hub nodes act as bridges for global information diffusion within the network. By clustering hub nodes together, the edges between hub nodes and other nodes transform into connections between supernodes in the compressed network, still ensuring the smooth passage of global information. Suppose we group a hub node and its neighbors into one; then, their interconnections become self-loop of that supernode that trap the information locally, acting as a barrier to global information transmission, as shown in Fig. 4, subplot b.

Strong communities

Networks with distinct community structures experience slow global information diffusion (due to few inter-community edges). Grouping nodes of the same community together transforms the numerous intra-community edges into self-loops on the supernodes, trapping information locally. Meanwhile, the few inter-community edges become low-weight edges between supernodes, similarly serving to slow down the speed of global information diffusion as shown in Fig. 4, subplot c. Through these three typical examples, we find that structural redundancy has been reduced while the corresponding functions are preserved. Therefore, this approach naturally benefits the preservation of information flow when compressing networks.

Then, based on the principle that “nodes with similar local structures are grouped together,” the graph neural network still needs to optimize the specific grouping strategy. This is mainly because, on one hand, the number of groups is often far less than the number of types of nodes with different local structures (imagine a network with 3 different types of nodes, whereas we need to compress it into a macro network with 2 nodes). On the other hand, our model produces continuous grouping results, for example, a certain type of node might enter group A with an 80% ratio and group B with a 20% ratio. Each different ratio represents a new grouping strategy, and we need to find the most effective one from infinitely many continuous grouping strategies, which is an optimization problem, hence requiring the aid of a machine learning model. Subplots d − f of Fig. 4 show discrete grouping strategies and the learned continuous grouping strategy for compressing a network with 5 types of nodes into a macroscopic network with 4 supernodes.

From Fig. 4, we can see that different discrete grouping strategies produce different macroscopic network structures (subplot d), our learned continuous grouping strategy (subplot e), the macroscopic network structure (subplot f), and the partition function difference corresponding to different grouping strategies (subplot g). It is evident from the figure that the error in preserving information flow with our learned continuous grouping strategy is lower than that of all discrete grouping strategies. In summary, this is how our model works: based on the fundamental useful idea that “nodes with similar local structures enter the same group,” our model uses the gradient descent algorithm to select one of the infinitely many solutions (continuous grouping strategies) that results in a lower error of the partition function.

Compressing networks from different domains

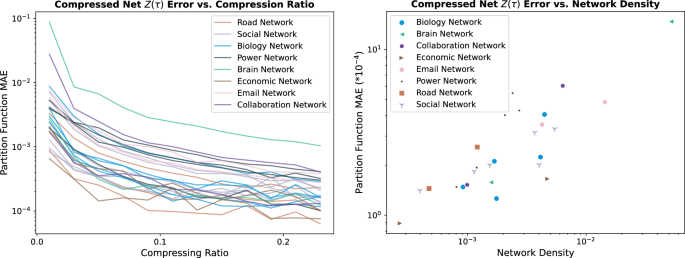

To further explore the applicable fields of our model, we conducted further analysis on various real networks and attempt to understand the differences in compression outcomes across different domains. In our upcoming experiments, we have selected 25 networks from 8 different fields: biological networks, economic networks, brain networks, collaboration networks, email networks, power networks, road networks, and social networks. Their node number ranges from 242 to 5908, and the number of edges ranges from 906 to 41,706. See Supplementary Table 2 for more information of these networks.

We compressed all networks at various scales, with compression ratios ranging from 1% and 2% up to 25% of the original network size. We aimed to explore how the effectiveness of compression varies with the compression ratio across networks from different domains. The results are shown in the left subplot of Fig. 5. First, we observed that in most of the cases, the error of the normalized partition function (measured by Mean Absolute Error) is less than 10−2, indicating that our model performs well across networks from different domains. Moreover, regarding the differences between networks from various domains, we found that the density of the network has a major impact on compression efficiency. As shown in the right subplot of Fig. 5, we plotted the density against the error in the partition function before and after compression for all networks, and observed a positive correlation(correlation coefficient of 0.856): a network with lower density tends to be more easily compressed. It is plausible that this happens because an increase in the number of connections makes the pathways for information transmission more complex, thereby increasing the difficulty of compression.

In the left subplot, we show the pre- and post-compression partition function error (measured by MAE) for 25 networks from 8 different domains at various compression ratios, ranging from 1% to 25%. In the right subplot, we display the relationship between the average compression error and the network’s density when the compression ratio is greater than 0.1 (at this point, the compression error is not quite sensitive to the compression size, which can be considered as the best compression error achievable by the model without being limited by size).

One compression model for many real-world networks

In previous sections, a separate GNN was trained to learn a compression scheme tailored to a specific network. Despite the satisfactory compression results, learning a new neural network for each dataset can be computationally costly. Therefore, we generalize the previous model and train it on a large set of empirical networks. We show that this generalization can compress new networks, the ones it hasn’t been trained on, without any significant learning cost.

First, we gather a dataset with 2, 193 real-world networks spanning various domains, from brains and biological to social and more, with the number of nodes ranging from 100 to 2, 495, from Network Repository (NR)75 (for more information about the dataset, training, and testing process, please see Supplementary Note 6). We randomly select the networks with size smaller than 1000 as the training set and keep the remaining networks as the test set. We apply one model to numerous networks, compress them to macro-networks, and calculate the loss in terms of partition function deviation. The results demonstrate highly effective learning that can successfully compress the test set, generating macro-networks that preserve the flow of information.

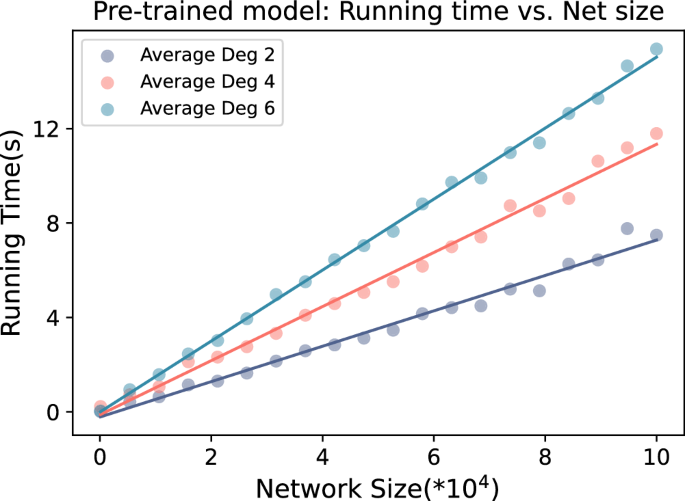

This pre-trained model directly possesses the capability to compress networks from different domains and sizes, and it reduces the computational complexity by orders of magnitude, outperforming existing methods. More technically, the time complexity of our model is O(N + E), where N is the system’s size and E the number of connections – which overall drops to O(n) for sparse networks – while the time complexity of other compression models often reaches O(N3) (box-covering, laplacian RG) or O(N2)(geometric RG). To better quantify the model’s time complexity, we validated it on a dataset with a much wider range of sizes. The results are shown in Fig. 6.

In this figure, we show the time required for a pre-trained model to run on W-S networks with different numbers of nodes and connections. The X-axis represents the number of nodes, and the Y-axis shows the time needed to compress the network.

link