VO2-based oscillators have been utilized as artificial neurons in many previous studies7,24,26,27,28,29,30, each featuring slightly different designs, mechanisms, and applications. In particular, we focus on thermal neuristors, a concept pioneered in ref. 7, which effectively reproduces the behavior of biological neurons. These neuristors are not only straightforward to manufacture experimentally but also exhibit advantageous properties such as rapid response times and low energy consumption.

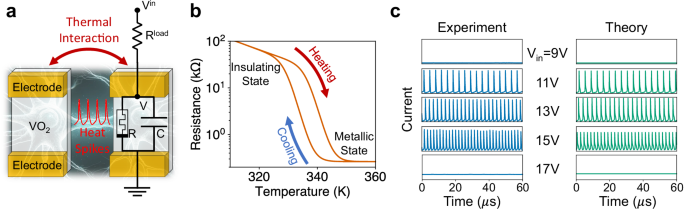

Figure 1a presents the design and circuitry of the thermal neuristor, featuring a thin VO2 film connected in series to a variable load resistor. VO2 undergoes an insulator-to-metal transition (IMT) at approximately 340 K31, with different resistance-temperature heating and cooling paths, which leads to a hysteresis loop, as depicted in Fig. 1b. Additionally, the system includes a parasitic capacitance resulting from the cable connections, which is vital for the neuristor’s operation.

a Schematic and circuit diagram of two neighboring thermal neuristors. Each neuristor is modeled as an RC circuit, which undergoes stable spiking oscillations with proper external input. Neighboring neuristors are electrically isolated but communicate with each other through thermal interactions. b The resistance-temperature characteristic of the VO2 film, denoted by the variable resistor R in (a). VO2 exhibits an insulator-to-metal transition at approximately 340 K, characterized by distinct heating and cooling trajectories, thus forming a hysteresis loop. c Illustration of stable spiking oscillations in a single neuristor across various input voltages, with the y-axis range for each plot set between 0 and 5 mA. Numerical simulations based on Eqs. (1) and (2) align well with experimental data, demonstrating stable spiking patterns within a certain input voltage range and an increase in spiking frequency proportional to the input voltage. Source data are provided in the Source Data file.

The behavior of the circuit displayed in Fig. 1a closely resembles a leaky integrate-and-fire neuron32. The capacitor C is charged up by the voltage source, Vin, and slowly leaks current through R. When the voltage across VO2 reaches a threshold, joule heating initiates the IMT, drastically reducing resistance in the VO2 which causes C to discharge, leading to a current spike. At the same time, the reduced resistance leads to reduced joule heating, which is then insufficient to maintain the metallic state, causing the VO2 film to revert to its insulating phase. This process repeats, producing consistent spiking oscillations.

We have experimentally fabricated and evaluated this system of VO2-based thermal neuristors. The spiking behavior of a single neuristor is shown in Fig. 1c. With insufficient heating, the neuristor does not switch from the insulating state whereas excessive heating keeps it perpetually in the metallic state. As a consequence, no spiking patterns emerge when the input voltage is too low or too high. Numerical simulations, using the model described in the next section, corroborate this behavior, mirroring the experimental findings.

Distinct from biological neurons that communicate via electrical or chemical signals, thermal neuristors interact through heat. As illustrated in Fig. 1a, adjacent neuristors, while electrically isolated, can transfer heat via the substrate. Each current spike produces a heat spike, which spreads to nearby neuristors, reducing their IMT threshold voltage, thereby causing an excitatory interaction. Conversely, excessive heat can cause neighboring neuristors to remain metallic and cease spiking, akin to inhibitory interactions between neurons. Further experimental insights on neuristor interactions are detailed in Appendix B the supplementary information (SI).

Although we have experimentally shown that a small group of thermal neuristors can mirror the properties of biological neurons, effective computations require a vast network of interacting neurons. Before building a complex system with many neuristors, we first simulate a large array of thermal neuristors, providing a blueprint for future designs.

Theoretical model

The theoretical model builds upon the framework established in ref. 7, with some minor adjustments. The system is built of identical neuristors, uniformly spaced in a regular 2-dimensional array. Their behavior is governed by the following equations:

$$C\fracdV_idt=\frac{V_i^{\rmin}}R_i^\rmload-V_i\left(\frac1R_i+\frac1{R_i^{{{\rmload}}}}\right),$$

(1)

$$C_{\rmth}\fracdT_idt=\fracV_i^2R_i-S_{\rme}(T_i-T_{\rm0})+S_{\rmc}\nabla ^2T_i+\sigma \eta _i(t).$$

(2)

Equation (1) describes the current dynamics, with each variable corresponding to those shown in Fig. 1a. Equation (2) describes the thermal dynamics, including the coupling between nearest-neighbor neuristors. Here, T0 represents the ambient temperature, Cth is the thermal capacitance of each neuristor, Se denotes the thermal conductance between each neuristor and the environment, and Sc refers to the thermal conductance between adjacent neuristors. ηi(t) represents a Gaussian white noise variable for each neuristor that satisfies \(\langle \eta _i(t)\eta _j(t^\prime )\rangle=\delta _i,j\delta (t-t^\prime )\), and σ is the noise strength. Detailed values of these constants are provided in the methods section. Ri is the resistance of the VO2 film, which depends on temperature and its internal state, or memory, following the hysteresis loop depicted in Fig. 1b. This memory factor is pivotal in determining the collective behavior of thermal neuristors. We utilize the hysteresis model formulated in ref. 33, with comprehensive details available in the methods section.

Numerical results

We used the theoretical model to simulate an L × L square lattice comprised of identical thermal neuristors, whose dynamics are governed by Eqs. (1) and (2). Different input voltages Vin produce a diverse array of oscillation patterns, as illustrated in Fig. 2. At very low (9V) or high (15V) input voltages, the system remains inactive, as found in individual neuristors. With a 12 V input voltage, synchronization develops, with nearly all neuristors spiking in unison, creating a phase of rigid states. A phase transition occurs slightly below Vin = 10 V, where clusters of correlated spikes start to form, then gradually turn into system-wide activity waves (10.4 V and 10.6 V). Another phase transition occurs slightly above Vin = 13.4 V, where the synchronized rigid oscillations start to fracture into smaller clusters until the individual spikes become uncorrelated (14 V).

In each panel, color indicates current level: white signifies no current, while shades of blue denote current spikes. The main panels show collective current-time plots for the first 1024 neuristors (concatenated from the first 16 rows), and each inset captures a specific moment in the 64 × 64 array. The system exhibits no activity at very low input voltages. As the voltage increases, a sequence of dynamic phases unfolds, including correlated clusters (10 V and 13.4 V), system-wide waves (10.4 V and 10.6 V), synchronized rigid states (12 V), and uncorrelated spikes (14 V), culminating again in inactivity at excessively high voltages. The thermal capacitance, Cth, is fixed at the experimentally estimated value. Detailed simulation parameters can be found in the methods section, and dynamic visualizations of these spiking patterns are available in Supplementary Movie 1.

Analytical understanding

The emergence of a broad range of phases and long-range correlations in our system, despite only diffusive coupling existing between neurons, is a point of significant interest. Diffusive coupling is typically associated with short-range interactions, making the discovery of long-range correlations particularly intriguing.

It is well-established that long-range correlations can emerge from local interactions in various systems such as sandpiles34, earthquake dynamics35, forest fires36, and neural activities37. These systems exhibit avalanches-cascades triggered when one unit’s threshold breach causes successive activations-manifesting as power-law distributions of event sizes, indicative of scale-free or near scale-free behaviors.

Such spontaneously emerging long-range correlations are often described under the framework of self-organized criticality34,35. However, this term may be misleading. Criticality suggests a distinct boundary, characterized by a phase above and below it, as seen in the sandpile model where an appropriately defined order parameter undergoes a second-order phase transition38,39. In contrast, systems like earthquakes, while displaying power-law behaviors, do not exhibit true scale-invariance40 and can be described as undergoing continuous phase transitions without clear critical boundaries39.

We argue that the observed LRO in our system, similar to those in systems without genuine scale-free behaviors, is induced by memory (time non-local) effects stemming from a separation of time scales: a slow external drive contrasts sharply with fast avalanche dynamics. In our system, we identified three distinct time scales: the metallic RC time (τmet = RmetC ~ 187 ns), the insulating RC time (τins = RinsC ~ 7.57 μs), and the thermal RC time (τth = RthCth = Cth/(Sc + Se) ~ 241 ns). We observe that τmet ⪅ τth ≪ τins. As the spiking and avalanche dynamics are primarily controlled by τmet and τth, and the driving dynamics by τins, our system does exhibit an approximate separation of time scales.

This separation allows us to conceptualize the slower time scale as memory, which retains long-term information about past states and remains relatively constant within the faster time scale, capable of preserving non-local temporal correlations. As a consequence, neuristors that are spatially distant are progressively coupled, resulting in long-range spatial correlations. This concept is systematically explored in a spin glass-inspired model41, and similar behavior is also observed in a class of dynamical systems with memory (memcomputing machines) used to solve combinatorial optimization problems42. In Appendix A in the SI, we provide an analytical derivation of this phenomenon using a slightly simplified version of our model.

Consequently, altering the memory strength, specifically through adjustments of the thermal time scale τth by varying Cth (the thermal capacitance of each neuristor), should result in changes to the oscillation patterns and the presence or absence of long-range correlations. Indeed, we find that by modifying Cth, we can control the rate of heat dissipation, effectively influencing the memory’s response time. Additionally, in Appendix C5 of the SI, we present another example where increasing the ambient temperature reduces the insulating RC time, thereby diminishing memory and minimizing long-range correlations.

Avalanche size distribution

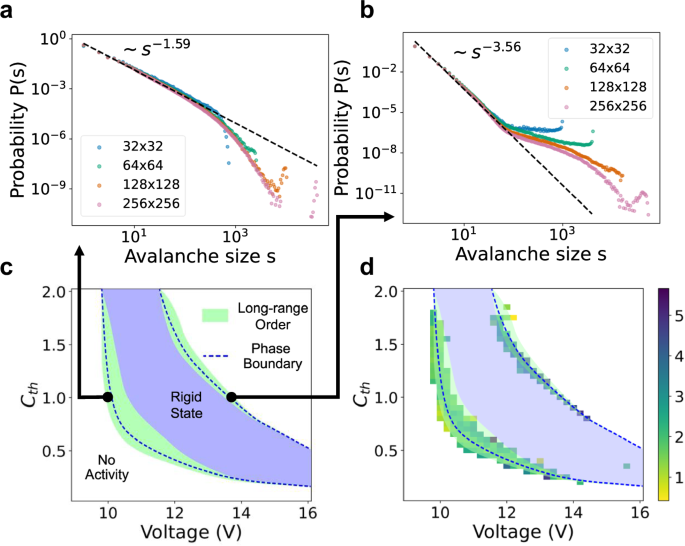

To verify the presence of LRO in our system, we analyzed the avalanche size distribution of current spikes. Here, we define an avalanche as a contiguous series of spiking events occurring in close spatial (nearest neighbor) and temporal (400 ns) proximity. The heat generated by each spiking event transfers to the neighboring neuristors, making their IMT more likely and thus triggering a cascade of spikes. Figure 3a and b shows examples of avalanche size distributions in which a power-law distribution is observed, indicative of LRO. The methodology for identifying these avalanches is detailed in the methods section.

a, b Two different avalanche size distributions at phase boundaries, with both distributions obtained at Cth = 1, but different input voltages (Vin = 9.96 V for (a), and 13.46 V for (b)). c Phase diagram of the thermal neuristor array, with the y-axis depicting the relative value of Cth compared to its experimentally estimated level. We observe synchronized rigid states with collective spiking and quiescent states with no spikes (no activity). Near the phase boundaries, a robust power-law distribution in avalanche sizes is noted across various parameters, signaling the existence of LRO. d Exponents of the power-law fit of the avalanche size distributions (omitting the negative signs for clarity). The phase diagram from (c) is superimposed for enhanced visualization. Regions lacking a colored box signify a failed power-law fit, and exponents are capped at 6 to exclude outliers. Source data are provided in the Source Data file.

We varied the input voltage and thermal capacitance, Cth, to generate the phase diagram depicted in Fig. 3c. Here, the y-axis reflects Cth’s relative value against the experimentally estimated one. Similar to observations in Fig. 2, both a synchronized rigid state, characterized by collective neuristor firing, and a quiescent state, with no spiking activity, are found. Around the phase boundaries, a wide range of parameters leads to a power-law distribution in avalanche sizes across several orders of magnitude, confirming the existence of LRO. This is further supported in Fig. 3d, where we compute avalanche sizes for each point in the parameter space and plot the absolute value of the exponent from the fitted power-law distribution. Areas without a colored box indicate an unsuccessful power-law fit, with the maximum exponent limited to 6 to remove outliers.

While we empirically observe power-law scaling in avalanche sizes, one might question if this implies criticality and scale-invariance. The numerical evidence presented here suggests otherwise. First, the power-law distributions in Fig. 3a and b do not align with the finite-size scaling ansatz39,43, which predicts diminishing finite-size effects with increasing system size. Furthermore, a rescaling based on the system size should collapse all curves onto one21,39 for scale-invariant systems, but such an effect is notably missing in our system, contradicting finite-size scaling expectations. In Supplementary Fig. 9, we present the results of attempted finite-size scaling, which clearly imply a lack of scale-invariance.

Despite the absence of criticality, can the system still perform some computing tasks effectively? Is the LRO observed in these thermal neuristor arrays even necessary for such tasks? We demonstrate in the following section that for classification, LRO, let alone criticality, is not necessary, as anticipated in23.

Role of LRO in reservoir computing classification tasks

We apply our thermal neuristor array to reservoir computing (RC) to answer the above questions. RC differentiates itself from traditional neural network models by not requiring the reservoir – the network’s core – to be trained. The reservoir is a high-dimensional, nonlinear dynamical system. It takes an input signal, x, and transforms it into an output signal, y = f(x). A simple output function, usually a fully connected layer, is then trained to map this output signal, y, to the desired output, \(\hat\bfz=g(\bfy)\). Training typically involves minimizing a predefined loss function between the predicted output \(\hat{{{\bfz}}}\) and the actual label z, associated with the input x, using backpropagation and gradient descent. If the output function is linear, training can be reduced to a single linear regression.

The reservoir’s transfer function f can be arbitrary, with its main role being to project the input signal x into a high-dimensional feature space. Since the reservoir doesn’t require training, employing an experimentally designed nonlinear dynamical system like our thermal neuristor array for RC is both effective and straightforward.

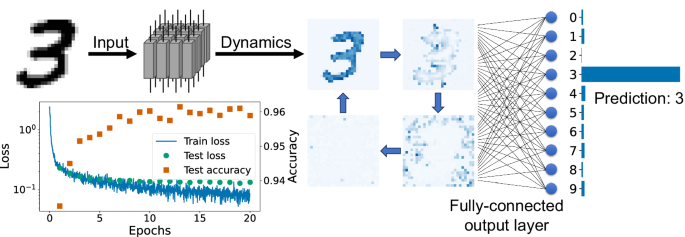

As a practical demonstration, we applied RC using thermal neuristors to classify handwritten digits from the MNIST dataset44. Each 28 × 28 grayscale pixel image, representing digits 0 to 9, is converted into input voltages through a linear transformation. The system is then allowed to evolve for a specific time, during which we capture the spiking dynamics as output features from the reservoir. Subsequently, a fully connected layer with softmax activation is trained to predict the digit. This process is schematically represented in Fig. 4.

The MNIST handwritten digit dataset44 is used as a benchmark. Each image from the dataset is translated into input voltages for a 28 × 28 thermal neuristor array. The array’s spiking dynamics are gathered as the reservoir output. A fully connected output layer, enhanced with softmax nonlinearity, is trained to classify the digit. The bottom-left panel illustrates the training process, displaying both loss and accuracy, culminating in a final test set accuracy of 96%. Source data are provided in the Source Data file.

The output layer was trained over 20 epochs, as shown in the bottom-left panel of Fig. 4. The test loss stabilized after approximately 10 epochs, and due to the network’s simple architecture, overfitting was avoided. Ultimately, the test set accuracy reached 96%. Further training details can be found in the methods section.

In this experiment, we treated the voltage transformation, thermal capacitance Cth, and noise strength σ as adjustable hyperparameters. This allowed us to check which region of phase space would produce optimal results. We found that the parameters that yielded optimal performance were an input voltage range between 10.5 V and 12.2 V, Cth = 0.15, and noise strength σ = 0.2 μJ ⋅ s−1/2. These settings placed us within the synchronized rigid phase, not the LRO one, with the input voltage variations introducing complex oscillatory patterns. In fact, choosing the parameters in the LRO phase produced worse results. We show this in Appendix C4 of the SI. The phase diagram relating to noise strength can be found in Supplementary Fig. 10, and videos of collective oscillations under image inputs are available in Supplementary Movie 2.

To further explore the role of LRO in reservoir computing tasks, Appendix C5 of the SI details our efforts to eliminate LRO within the reservoir by either removing interactions between neurons altogether or by reducing memory. We quantified LRO using the avalanche size distribution under these various settings. The findings reveal that even when the reservoir operates in a rigid or non-interacting state, long-range structures inherited from the dataset are still apparent. However, no relation between LRO and computational performance was observed. Videos demonstrating collective oscillations under these conditions are available in Supplementary Movies 3 and 4.

As further verification, Appendix C6 of the SI documents an additional experiment involving the prediction of chaotic dynamics governed by the 2D Kuramoto-Sivashinsky equations45. The results corroborate our primary findings: optimal performance in reservoir computing is achieved without the presence of LRO within the reservoir.

In conclusion, the spiking dynamics of the optimally performing reservoir in our study are not characterized by an LRO state. This observation aligns with the findings in23, and challenges the well-accepted critical brain hypothesis14 and theories suggesting that near-critical states enhance computational performance46,47. However, our results do not directly contradict the critical brain hypothesis, since it is possible that long-range correlations are effectively encapsulated within the feed-forward layer. Despite this possibility, our findings highlight a crucial aspect: criticality is not a prerequisite for effective computational performance in such tasks.

link